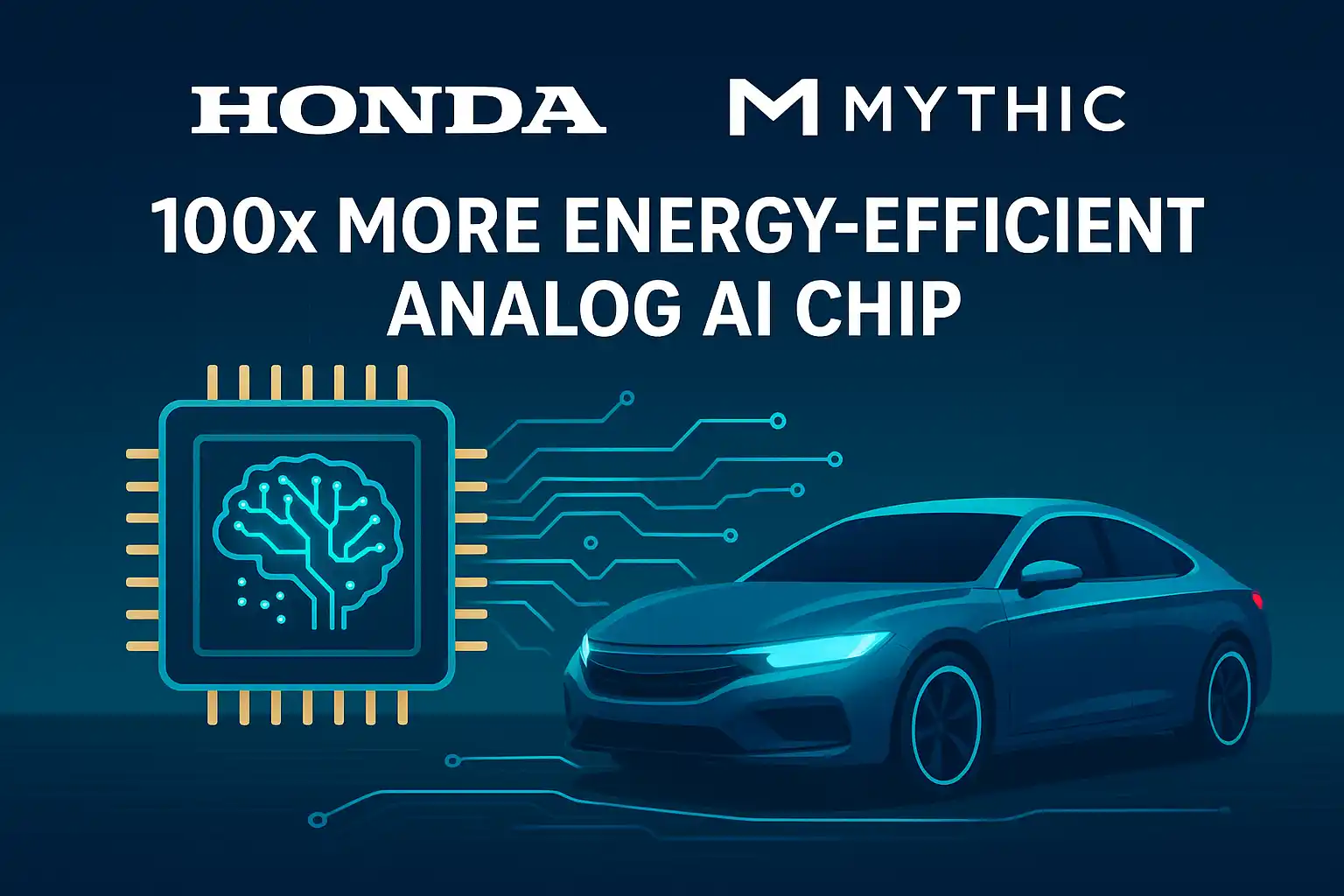

Microsoft has taken a major step in the AI hardware space with the launch of Maia 200, a new AI accelerator chip designed specifically for inference workloads. The chip will be deployed across Microsoft’s Azure cloud data centers, helping power services such as Copilot, OpenAI models, and other large-scale AI applications with higher speed and lower cost.

With Maia 200, Microsoft is strengthening its position in the rapidly growing AI infrastructure market and reducing its dependence on third-party AI chips.

What Is Maia 200?

Maia 200 is a custom-built AI inference accelerator developed by Microsoft.

Its primary role is to run trained AI models efficiently in real-world applications, such as answering user queries, generating text, or supporting enterprise AI tools.

In simple terms:

- Training teaches an AI model

- Inference is when the model is actually used

Maia 200 is purpose-built for the second task.
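
To make the distinction concrete, here is a minimal toy sketch in plain Python. It is not related to any Microsoft software; the functions `train` and `infer` and the tiny dataset are hypothetical, chosen only to show that training loops over data to adjust a weight, while inference is a single cheap pass with the weight already fixed.

```python
# Toy illustration: "training" fits a parameter, "inference" only applies it.

def train(samples, epochs=200, lr=0.01):
    """Fit y = w * x by gradient descent on squared error."""
    w = 0.0
    for _ in range(epochs):
        for x, y in samples:
            error = w * x - y
            w -= lr * error * x  # gradient step (constant factor folded into lr)
    return w

def infer(w, x):
    """Inference: one forward pass with the already-trained weight."""
    return w * x

data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]  # hypothetical samples of y = 2x
w = train(data)                # expensive, done once
print(round(infer(w, 10.0), 2))  # cheap, repeated for every request -> 20.0
```

An inference accelerator targets the `infer` side of this split: the same fixed weights applied again and again to new inputs.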

Why Did Microsoft Build Maia 200?

As AI adoption accelerates globally, demand for faster and more cost-efficient AI computing has surged. Until now, most cloud providers have relied heavily on chips from Nvidia or other third-party vendors.

With Maia 200, Microsoft aims to:

- Lower AI infrastructure costs on Azure

- Improve performance for large language models

- Gain tighter control over its AI hardware roadmap

- Compete directly with Amazon (Trainium), Google (TPU), and Nvidia

Advanced Technology Behind Maia 200

Maia 200 is built using some of the most advanced semiconductor technologies available today.

🔹 Cutting-Edge Manufacturing

- Manufactured on TSMC’s 3nm process

- Contains approximately 140 billion transistors

🔹 High-Speed Memory

- 216 GB of HBM3e memory

- Up to 7 TB/s memory bandwidth

- Designed to handle large AI models smoothly and efficiently
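
A rough back-of-the-envelope sketch shows what these two figures imply. It assumes decimal units (1 GB = 1e9 bytes), counts model weights only (ignoring activations and key-value caches), and uses a hypothetical 200-billion-parameter model. The result is a lower bound derived from peak bandwidth, not a measured number.

```python
HBM_CAPACITY_BYTES = 216e9   # 216 GB, from the figures above
HBM_BANDWIDTH_BPS = 7e12     # 7 TB/s peak, from the figures above

def weights_bytes(num_params, bits_per_param):
    """Memory needed to hold the weights alone."""
    return num_params * bits_per_param / 8

def min_seconds_per_pass(num_params, bits_per_param):
    """Lower bound on the time to stream every weight once from HBM."""
    return weights_bytes(num_params, bits_per_param) / HBM_BANDWIDTH_BPS

params = 200e9  # hypothetical 200-billion-parameter model
for bits in (8, 4):
    size = weights_bytes(params, bits)
    fits = size <= HBM_CAPACITY_BYTES
    ms = min_seconds_per_pass(params, bits) * 1e3
    print(f"FP{bits}: {size / 1e9:.0f} GB, fits={fits}, >= {ms:.1f} ms per pass")
# FP8: 200 GB, fits=True, >= 28.6 ms per pass
# FP4: 100 GB, fits=True, >= 14.3 ms per pass
```

Halving the bits per weight halves both the footprint and the minimum time to read the weights, which is one reason low-precision formats matter for inference.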

🔹 AI-Optimized Architecture

- Native support for FP4 and FP8 tensor operations

- Optimized for low-latency, high-throughput inference tasks
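
To give a feel for how coarse a 4-bit float is, the sketch below rounds a few values to a 4-bit grid. The source does not say which FP4 encoding Maia 200 uses; this example assumes the common E2M1 layout (magnitudes 0, 0.5, 1, 1.5, 2, 3, 4, 6) with a single per-tensor scale. The helper `quantize_fp4` is hypothetical and does not reflect any Maia SDK API, and its tie handling is simplified.

```python
# Representable magnitudes of an E2M1 4-bit float (assumed format).
FP4_E2M1_MAGNITUDES = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]

def quantize_fp4(values):
    """Round each value to the nearest scaled E2M1 magnitude, keeping its sign."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 6.0 if max_abs > 0 else 1.0  # map the largest value to 6
    out = []
    for v in values:
        target = abs(v) / scale
        nearest = min(FP4_E2M1_MAGNITUDES, key=lambda m: abs(m - target))
        out.append((nearest if v >= 0 else -nearest) * scale)
    return out

weights = [0.12, -0.45, 0.33, 1.2, -0.9]  # hypothetical weights
print(quantize_fp4(weights))  # approximately [0.1, -0.4, 0.3, 1.2, -0.8]
```

Each weight lands on one of only 15 distinct values (positive, negative, and zero), so accuracy depends heavily on how the scale is chosen. Production schemes typically use finer-grained scaling than the single scale shown here.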

Performance Capabilities

According to Microsoft:

- Maia 200 delivers 10+ petaFLOPS (FP4) and around 5 petaFLOPS (FP8)

- Provides up to 30% better performance-per-dollar compared to competing solutions

- Outperforms rival inference chips from Amazon and Google in key workloads

This means faster AI responses at lower operational costs for cloud customers.
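
A performance-per-dollar gain does not translate one-to-one into savings. As a small worked calculation, assuming the 30% figure applies uniformly to a given workload, the cost of doing the same work falls to 1 / 1.3 of the baseline:

```python
def cost_ratio(perf_per_dollar_gain):
    """Cost of the same workload relative to baseline, for a fractional gain."""
    return 1.0 / (1.0 + perf_per_dollar_gain)

ratio = cost_ratio(0.30)  # 30% better performance per dollar
print(f"relative cost: {ratio:.3f}, saving: {(1 - ratio) * 100:.1f}%")
# relative cost: 0.769, saving: 23.1%
```

So "up to 30% better performance-per-dollar" corresponds to roughly 23% lower cost for a fixed amount of work, at best.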

Impact on Azure and OpenAI Services

Maia 200 will directly benefit:

- Azure AI services

- Microsoft Copilot

- OpenAI-powered workloads running on Azure

For users and enterprises, this translates into:

- Faster AI responses

- Improved scalability

- Reduced cloud computing costs

Deployment Status

- Maia 200 is already live in select Azure data centers in the United States

- Global rollout across additional Azure regions is planned in the coming months

Microsoft has also released a developer SDK preview, enabling developers to optimize AI models for Maia 200 using familiar frameworks.

Microsoft Enters the AI Chip Race

With the launch of Maia 200, Microsoft joins the group of companies building their own AI accelerators, alongside:

- Nvidia

- Google

- Amazon

Industry experts believe custom AI chips will play a crucial role in shaping the future of cloud computing, as AI becomes central to enterprise and consumer services alike.

Conclusion

Maia 200 is more than a new chip: it reflects Microsoft’s long-term strategy to lead in AI infrastructure. By delivering faster inference, lower costs, and tighter integration with Azure, Maia 200 strengthens Microsoft’s position in the global AI race.

As AI usage continues to grow, custom accelerators like Maia 200 are expected to define how efficiently and affordably AI services are delivered worldwide.

Source: Microsoft blog